Decision Intelligence: A Better Way to Tackle Wicked Problems

Complex policy decisions often fail not from lack of effort or data, but because the frameworks used to make them are mismatched to the problems they're trying to solve. Wicked problems, which show up across conservation ecology and the broader public policy sector, are a prime example. These are complex system problems where information is confusing, stakeholders have conflicting values, and the ramifications ripple unpredictably through entire systems. Decision intelligence (DI) is an approach designed specifically for this challenge. It can model both the complexity of these systems and the uncertainty of our assumptions and predictions within them. It also offers an approachable method that non-experts can learn and that brings in stakeholders alongside diverse experts. DI’s human-in-the-loop nature, integrating people, process, and technology, makes it a powerful framework for high-impact decisions that can adapt as conditions change.

Is Decision Intelligence Just Another Buzzword?

Fair question. Pratt and Malcolm[1] define it this way: "DI is a methodology and set of processes and technologies for making better, more evidence-based decisions by helping decision makers understand how the actions they take today can affect their desired outcomes in the future." More specifically, DI is a causal approach to modeling complex decisions: applying data, machine learning, simulation, and optimization alongside human judgment to drive desired outcomes.

DI builds on established frameworks like Multi-Criteria Decision Analysis (MCDA), Structured Decision Making (SDM), and Systematic Conservation Planning (SCP).[2] It doesn't replace these approaches; it starts from the same decision science foundations. Where DI distinguishes itself is in four areas:

Causal modeling of complex decisions;

The simplicity of its approach: DI is designed to match the natural mental models of decision makers, so unlike many other disciplines it does not demand a steep learning curve before you can start using it;

The use of technology alongside human judgment to make repeated decisions with better outcomes than either approach alone;

The ability to generate dynamic learning loops such that decisions today drive insights about how to make better decisions tomorrow.

Why Starting with Data Is the Wrong Move

Most decision frameworks, even sophisticated ones, begin with available data or a predictive model, then reason toward outcomes. DI inverts this. It begins by identifying the outcomes and goals, then "backs into" the data and models needed to achieve them. This distinction matters more than it might seem, because the data-first approach sets up three compounding problems:

Problem 1: Correlation isn't causation. Most data analytics and machine learning models are correlative. Approaches that begin with correlation capture at best a subset of system dynamics and often miss outcomes entirely, including ones with large and contradictory impacts.

Problem 2: Sparse data constrains decisions. In complex systems, complete data is rare. Even when a lot of data is available, it is often “stale”, representing patterns in systems that have since changed. Starting with limited data means limiting decisions to what you happen to have on hand. DI instead supports building a decision model before any data is gathered, and such a model can provide substantial value even with no data at all.

Problem 3: More data isn't always better. This is the flip side of Problem 2. Evidence-based decision making sometimes attempts to throw all available data at a decision, whether relevant or not. Without knowing which data matters to the desired outcomes, the result is wasted time and money. DI sidesteps this by beginning with the end in mind.

How DI Actually Works

Decision intelligence begins by assembling the right stakeholders: subject matter experts, community members, business representatives, government officials, and others both making and affected by the decision. Once assembled, the group identifies the objective question: the overarching decision to be made.

From there, stakeholders define outcomes (what downstream impacts are we trying to achieve, and how will we measure them?) and goals (what specific levels of each outcome constitute success?). For example, "What is the most effective approach to rehabilitate this ecosystem to increase biodiversity as measured by the Shannon diversity index?" seems clear, but "effective" might mean fastest, lowest-cost, easiest to implement, or some combination. The stakeholder group must make this explicit and quantify what winning looks like.
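To make "quantify what winning looks like" concrete, here is a minimal sketch of how the Shannon diversity index mentioned above could be computed and compared against a goal. The index itself is the standard H' = -sum(p_i * ln p_i); the species counts and the target value are invented for illustration and do not come from any real survey.

```python
import math

def shannon_index(counts):
    """Shannon diversity index H' = -sum(p_i * ln p_i) over species with nonzero counts."""
    total = sum(counts)
    if total <= 0:
        raise ValueError("counts must contain at least one individual")
    return -sum((c / total) * math.log(c / total) for c in counts if c > 0)

# Hypothetical survey: number of individuals observed per species.
baseline_counts = [50, 30, 15, 5]

# Hypothetical goal the stakeholder group agreed on for this outcome.
target_h = 1.3

baseline_h = shannon_index(baseline_counts)
goal_met = baseline_h >= target_h
print(f"baseline H' = {baseline_h:.3f}, goal met: {goal_met}")
```

Writing the goal down as a number in this way forces the group to agree on both the measure and the threshold before any lever is discussed.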

Next, DI identifies three additional elements:

Levers: what controls can be changed to drive desired outcomes? Another name for levers is “choices of actions to take”.

Externals: what might affect outcomes but lies outside our control?

Intermediates: things you can measure that are the direct result of actions, but precede the outcome.

Intermediates are a key differentiator. By explicitly mapping the causal chains that connect actions to outcomes, and the feedback loops between components, DI builds a mechanistic picture of the system rather than a correlative one.

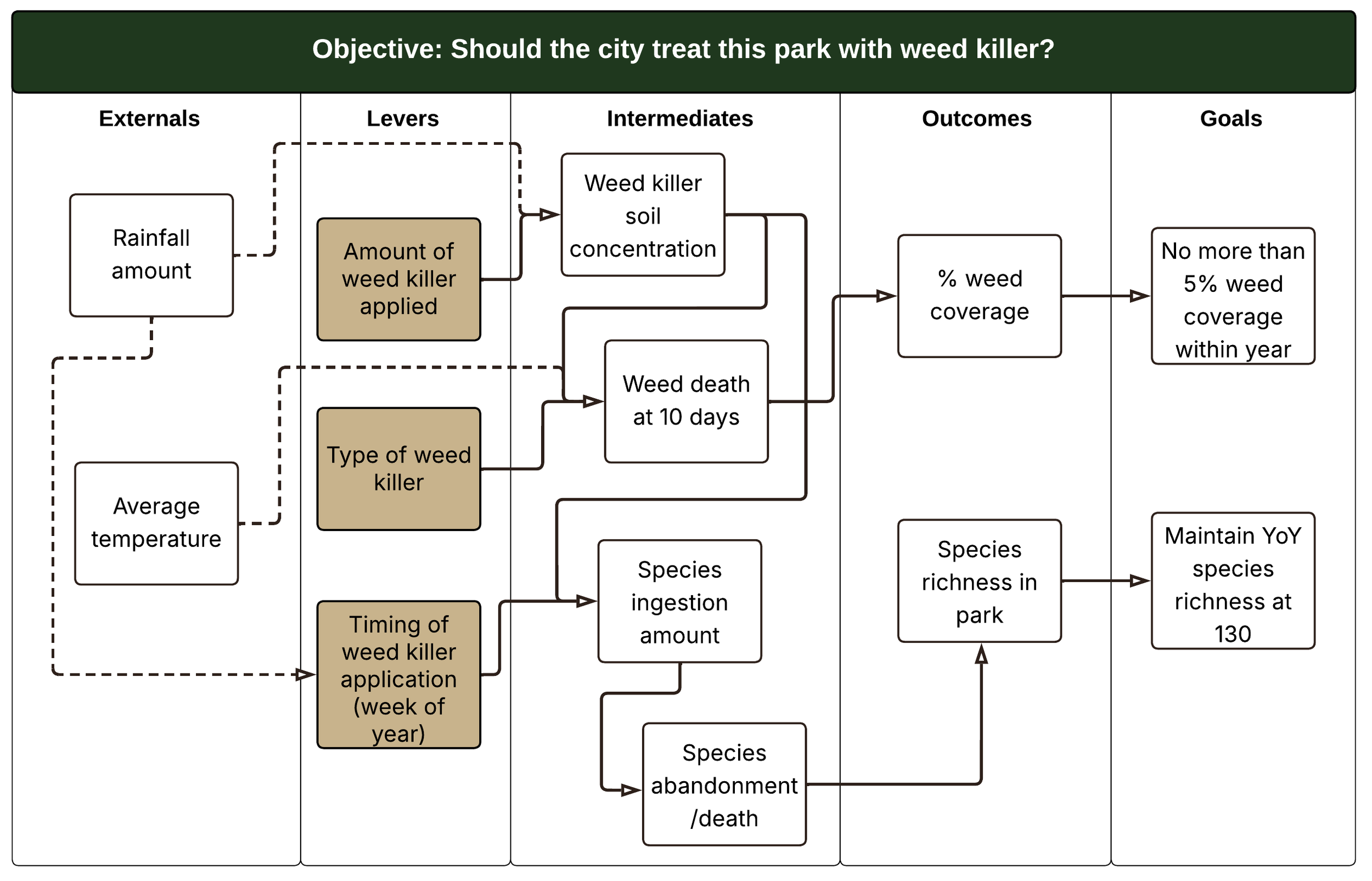

The Causal Decision Diagram

All of these elements are captured visually in a Causal Decision Diagram (CDD). The objective question sits at the top; arrows form causal chains connecting externals, levers, intermediates, outcomes, and goals. Many people look at a CDD and think, “this is familiar, it’s just a workflow diagram” (or flow chart, or data flow diagram). It’s not. A workflow diagram shows the sequence of things that will be done; a CDD shows why to take the actions represented by the levers, by tracing their consequences.

Figure 1: An example Causal Decision Diagram. The objective question is shown at the top, and arrows form causal chains between externals, levers, intermediates, outcomes, and goals.

CDDs are pivotal to the DI approach for several reasons:

They make explicit the assumptions stakeholders hold about how levers connect to outcomes;

They act as a scaffold for simulations to explore system dynamics and the uncertainty around them;

They surface which causal chains have strong data support and which are essentially guesses;

They allow stakeholders to encode their value judgments about outcomes, revealing where different stakeholders might respond very differently to the same decision.
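One way to picture these properties is to treat a CDD as a small directed graph whose links carry a note about how well supported they are. The sketch below is only an illustration: the node names, the evidence labels ("data", "expert", "guess"), and the helper functions are all assumptions made for this example, not part of any DI tool or standard.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Link:
    cause: str
    effect: str
    evidence: str  # "data", "expert", or "guess" (labels assumed for this sketch)

# Hypothetical CDD for a restoration decision; every name here is invented.
NODE_KINDS = {
    "beaver_reintroduction": "lever",
    "annual_rainfall": "external",
    "dam_count": "intermediate",
    "soil_moisture": "intermediate",
    "shannon_index": "outcome",
}

LINKS = [
    Link("beaver_reintroduction", "dam_count", "data"),
    Link("dam_count", "soil_moisture", "guess"),
    Link("annual_rainfall", "soil_moisture", "data"),
    Link("soil_moisture", "shannon_index", "expert"),
]

def causal_chains(start, links, kinds):
    """Return every path from `start` to an outcome node, as lists of Links."""
    chains = []

    def walk(node, path):
        if kinds[node] == "outcome":
            chains.append(path)
            return
        for link in links:
            # Skip links already on this path so feedback loops terminate.
            if link.cause == node and link not in path:
                walk(link.effect, path + [link])

    walk(start, [])
    return chains

def weakest_links(chain):
    """Links in a chain that stakeholders flagged as essentially guesses."""
    return [link for link in chain if link.evidence == "guess"]

chains = causal_chains("beaver_reintroduction", LINKS, NODE_KINDS)
for chain in chains:
    nodes = [chain[0].cause] + [link.effect for link in chain]
    guesses = [(link.cause, link.effect) for link in weakest_links(chain)]
    print(" -> ".join(nodes), "| guesses:", guesses)
```

Listing the guessed links per chain gives the group a short, concrete agenda: those are the assumptions to debate or to go gather data for.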

Once a CDD is in place, the decision workflow is built around it. Simple simulations come first: if I change this lever by 20%, what happens to this outcome? Does it behave as expected? Sometimes the results surprise stakeholders, revealing that a seemingly small change has outsized effects on the system. The simulations also identify where additional data or modeling would most reduce uncertainty. As one example: "if we knew more about how beaver dams affect soil hydrology, we might be able to predict flooding potential with much smaller uncertainty."
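A "change this lever by 20%" experiment can be sketched with a few lines of Monte Carlo simulation. Everything in the model below is a made-up toy: the single lever, the uncertain effect size, the rainfall distribution, and the saturating response curve are assumptions chosen only to show the mechanics, not a model of any real ecosystem.

```python
import random
import statistics

def simulate_outcome(lever, rng):
    """One random draw of a toy causal chain: lever -> intermediate -> outcome."""
    # Uncertain strength of the lever's effect on the intermediate (assumed range).
    effect = rng.uniform(0.5, 1.5)
    # External factor outside our control (assumed distribution).
    rainfall = rng.gauss(100.0, 15.0)
    intermediate = effect * lever + 0.2 * rainfall
    # Saturating response: the outcome levels off as the intermediate grows.
    return intermediate / (intermediate + 50.0)

def run(lever, n=5000, seed=0):
    """Mean and standard deviation of the outcome over n simulated draws."""
    rng = random.Random(seed)  # fixed seed so both scenarios see the same draws
    draws = [simulate_outcome(lever, rng) for _ in range(n)]
    return statistics.mean(draws), statistics.stdev(draws)

baseline_mean, baseline_sd = run(lever=40.0)
changed_mean, changed_sd = run(lever=40.0 * 1.2)  # lever increased by 20%

print(f"baseline:   {baseline_mean:.3f} +/- {baseline_sd:.3f}")
print(f"+20% lever: {changed_mean:.3f} +/- {changed_sd:.3f}")
```

Reusing the same seed for both scenarios means the difference between them reflects the lever change rather than random noise. The spread of the draws shows how much the uncertain effect size matters, which is the kind of signal that points to where better data would help most.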

Learning Gets Built In

What the eventual decision system looks like depends on the CDD and the technical depth required. Sometimes a CDD in diagram form alone creates tremendous value, even without any technology. Other times, a full technology product with a user interface surfacing real-time recommendations is needed for robust, repeated decision making.

The most powerful feature of a mature DI system follows from the statistician's maxim: "all models are wrong, but some are useful." Since no model is right from the start, the system has to learn. By integrating technology within a human-centered approach, DI enables accurate measurement not just of outcomes, but of the intermediates along each causal chain, and measuring both allows the system to improve over time. Humans remain in the loop because these are socio-ecological systems, shaped as much by human behavior as by natural dynamics. Decisions are recorded alongside their impacts; models adjust. The next recommended decision may differ from the last, informed by everything learned since.
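As a rough illustration of "decisions are recorded alongside their impacts; models adjust", the sketch below keeps a log for one causal link and refits a single slope whenever a new measurement arrives. The `LinkModel` class, the straight-line relationship, and all the numbers are hypothetical. A real system would likely use a richer model and would blend prior belief with new evidence, whereas this toy simply replaces the initial belief once measurements exist.

```python
from dataclasses import dataclass, field

@dataclass
class LinkModel:
    """Toy model of one causal link: intermediate ~= slope * lever."""
    slope: float                             # initial belief, e.g. from expert judgment
    log: list = field(default_factory=list)  # (lever, observed_intermediate) records

    def predict(self, lever):
        return self.slope * lever

    def record(self, lever, observed):
        """Store a decision with its measured result, then refit the slope."""
        self.log.append((lever, observed))
        num = sum(x * y for x, y in self.log)
        den = sum(x * x for x, _ in self.log)
        if den > 0:
            self.slope = num / den  # least-squares fit through the origin

model = LinkModel(slope=2.0)  # hypothetical starting assumption
before = model.predict(10.0)

# Two hypothetical decisions and the intermediate values measured afterwards.
model.record(lever=10.0, observed=14.0)
model.record(lever=20.0, observed=30.0)
after = model.predict(10.0)

print(f"prediction before learning: {before}, after: {after:.2f}")
```

The point is the loop rather than the fitting method: because the intermediate is measured, not just the final outcome, a wrong assumption about this one link can be corrected without waiting for the whole chain to play out.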

What Comes Next

I hope the value of decision intelligence for public policy is becoming clear, especially for problems that are wicked, stakeholder groups that are diverse, and data that is sometimes sparse. In the next post, I'll walk through a full CDD in the sustainability space to show how DI can be applied in practice. Later posts will explore case studies in water resource management, carbon policy, and marine conservation.

References and Further Reading:

[1] L. Y. Pratt and N. E. Malcolm, The Decision Intelligence Handbook: Practical Steps for Evidence-Based Decisions in a Complex World (O’Reilly Media, 2023).

[2] Mark W. Schwartz et al., “Decision Support Frameworks and Tools for Conservation,” Conservation Letters 11, no. 2 (2018): e12385, https://doi.org/10.1111/conl.12385.